In the first part of this series on SharePoint 2010 infrastructure considerations for Amazon EC2, I introduced the AWS platform and took a closer look at storage, snapshots and provisioning. In the second post I moved on to networking and cloning. In this third post I will discuss administration, delegation and licensing.

Other posts in this series

- SharePoint 2010 Infrastructure for Amazon EC2 Part I: Storage and Provisioning

- SharePoint 2010 Infrastructure for Amazon EC2 Part II: Cloning and Networking

- SharePoint 2010 Infrastructure for Amazon EC2 Part III: Administration, Delegation and Licensing

- SharePoint 2010 Infrastructure for Amazon EC2 Part IV: Cost Analysis

- Amazon VPC and VM Import Updates

Administration, Delegation and Usage Costs

The Tools

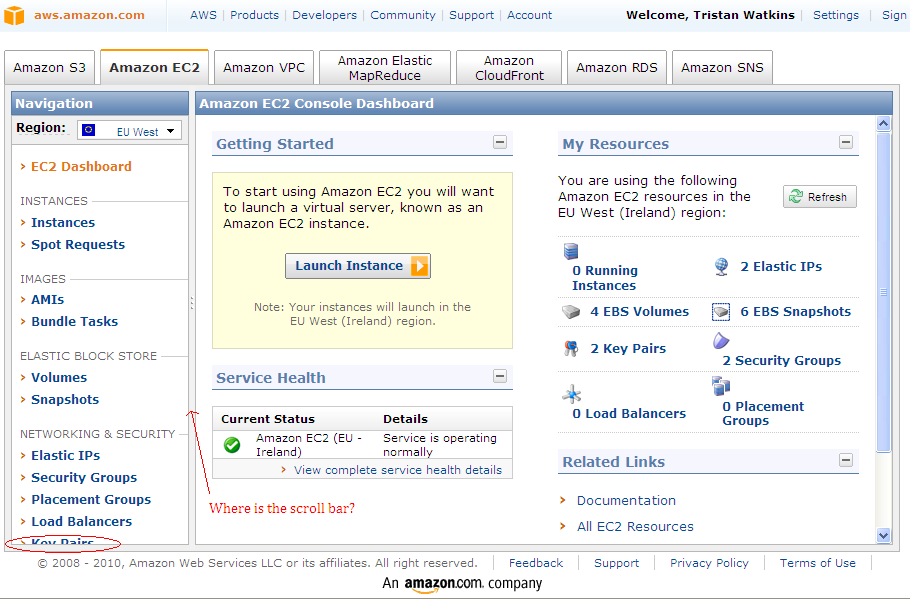

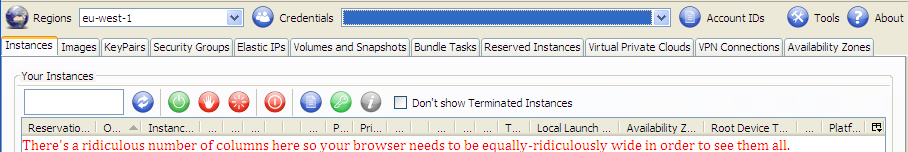

Unfortunately, the AWS Management Console user experience is fairly hideous. It doesn’t size properly in the browser and it has annoying synchronous post-back behaviours. It generally feels like an enormous Java app. I’m reminded of Cisco administration consoles circa the early part of this century. There is a Firefox add-on called ElasticFox which improves things a bit, but I wouldn’t say I’m thrilled with it either. I would classify it as less clunky, but I’d hesitate to go much further.

My colleague Brendan Newell co-evaluated Amazon Web Services with me. He identified very early on that we would need a more sophisticated management tool. He found LabSlice and we looked at that for a bit. It’s fairly basic, but it adds three features that make it compelling by comparison: policies, delegation and reporting. Those features provide administrative controls for smart delegation, or at least a start towards that control. It is a new product, so it’s reasonable to expect that it will improve. If we ever use AWS in anger, LabSlice or a tool like it will almost certainly form a part of the picture unless the Amazon administrative tools improve by then.

Why are these added features so important?

The underlying issue is that running an instance 24/7 costs more than four times as much as running it for 40 hours/week at On-Demand prices (168 hours against 40). Amazon provide Reserved Instances to try to address this always-on option, but the cost savings of “nearly 50%” assume the instance would always be running at On-Demand costs, so you’re paying roughly 50% of the price for about four times as many hours. That still comes to around twice the cost of a 40-hour week, which doesn’t really compute.

In reality, it may not be possible to run instances for only 40 hours/week, but it should be possible to run them for less than 50 hours/week for most users, with the right controls in place, and this figure could be a lot less if instances aren’t used every day.
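To make the comparison concrete, here is a minimal sketch of the weekly arithmetic. It assumes the $0.48/hour On-Demand rate for a large Windows instance quoted later in this post, and it treats the Reserved Instance saving as a flat 50% discount on the hourly rate. That is a simplification that ignores how the up-front fee is actually structured, so treat the figures as illustrative only.

```python
HOURS_PER_WEEK = 24 * 7  # 168 hours in a week


def weekly_cost(hourly_rate, hours_per_week):
    """Cost of running one instance for the given number of hours in a week."""
    return hourly_rate * hours_per_week


rate = 0.48  # On-Demand rate for a large Windows instance, as quoted in this post

always_on = weekly_cost(rate, HOURS_PER_WEEK)  # left running 24/7
office_hours = weekly_cost(rate, 40)           # a strict 40-hour week
realistic = weekly_cost(rate, 50)              # the "less than 50 hours" target

# Assumption: model the "nearly 50%" Reserved Instance saving as a flat discount.
reserved_always_on = always_on * 0.5

print(f"24/7 On-Demand:        ${always_on:.2f}/week")
print(f"40 h/week On-Demand:   ${office_hours:.2f}/week")
print(f"50 h/week On-Demand:   ${realistic:.2f}/week")
print(f"24/7 Reserved (approx): ${reserved_always_on:.2f}/week")
print(f"24/7 vs 40 h ratio:    {always_on / office_hours:.1f}x")
```

Even with the discount, the always-on Reserved figure stays above twice the 40-hour figure, which is why usage controls matter more than the pricing option.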

So the question becomes how usage can be controlled without disrupting the value of the service. Too much control and the service becomes an obstacle to delivery. Too little control and the accounting department will be most displeased.

At a high level, these are the options we considered (with some bad options thrown in to illustrate the point):

- Get a reporting tool that will expose usage patterns on an individual and team level.

- Potentially bill teams for usage.

- Potentially bill clients for usage (trickier).

- Potentially set up a scheduled task that will automatically shut down an instance eight (or nine, or ten) hours after it is launched. Train users how to cancel the shutdown when they will be working late. While this solution is quite inelegant, it might work – depending on the users and their usage patterns.

- Use LabSlice (or a similar tool) to allow users to turn machines on and off, but not to create images or provision new machines. Set up policies to automatically shut down machines after a specific amount of running time.

- Get Draconian and have managers/administrators enforce shutdown at the end of the day. Keep in mind, this is likely to taint any positives associated with this service and could prove very difficult to implement if users have valid reasons to leave machines on periodically. Is the enforcer really going to understand these nuances? In short, I suspect this won’t fit the culture of most businesses.
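The automatic shutdown options in this list boil down to a simple rule: stop an instance once it has been running longer than an agreed limit, unless the user has asked for it to stay up. The sketch below shows only that decision logic. The `Instance` class, the `keep_until` override and the instance names are all hypothetical; this is not how LabSlice or any AWS tool implements its policies, and actually stopping the machines is left out.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Instance:
    name: str
    launched_at: datetime
    # Hypothetical override: the user sets this when they plan to work late.
    keep_until: Optional[datetime] = None


def due_for_shutdown(instances, now, max_hours=9):
    """Return the names of instances that have exceeded the running-time limit."""
    limit = timedelta(hours=max_hours)
    due = []
    for inst in instances:
        # Respect an override that has not yet expired.
        if inst.keep_until is not None and now < inst.keep_until:
            continue
        if now - inst.launched_at >= limit:
            due.append(inst.name)
    return due


now = datetime(2011, 3, 1, 18, 30)
instances = [
    # Running for 10 hours with no override: should be stopped.
    Instance("dev-sp2010-a", launched_at=datetime(2011, 3, 1, 8, 30)),
    # Running for 10.5 hours, but the user asked to keep it until 21:00.
    Instance("dev-sp2010-b", launched_at=datetime(2011, 3, 1, 8, 0),
             keep_until=datetime(2011, 3, 1, 21, 0)),
    # Running for only 5.5 hours: under the limit.
    Instance("dev-sp2010-c", launched_at=datetime(2011, 3, 1, 13, 0)),
]

print(due_for_shutdown(instances, now))  # ['dev-sp2010-a']
```

Raising `max_hours` gives the more liberal policy; the override is what keeps the rule from becoming an obstacle for people with a valid reason to work late.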

Remember, the point of all of this is to achieve the lowest cost, as the service will probably only be affordable with these controls in place. Without a mechanism to ensure machines are turned off, the business is exposed to 24-hour usage costs. I will give examples of projected costs without these controls later.

Back to the Public IP Addresses

Update 17 March 2011: the information regarding the public IP addresses and the VPC below is now out of date. Please see my follow-up post on Amazon VPC and VM Import Updates for more information.

If you recall from the last post, I mentioned that new public IP addresses are generated for instances whenever they are started up (unless the VPC is being used, in which case there is no public IP address). One of the features that you’ll want to find in your management tool is the ability to connect to instances after users have started them up. This environment isn’t going to work very well unless users can find out their new IP address every morning. As I mentioned before, this could also probably be scripted and is likely to form a part of other tools besides LabSlice. The point of reiterating this now is that it’s key functionality in a management tool and it will probably be very messy getting by without it.
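As a rough illustration of the scripting route, the sketch below pulls the current public IP address of each running instance out of a response shaped like the output of EC2's DescribeInstances call. It assumes that response has already been fetched and parsed into a Python dictionary; how you fetch it is left out, and the sample data (instance IDs and addresses) is made up.

```python
def running_public_ips(response):
    """Map instance ID -> public IP for every running instance in the response."""
    ips = {}
    for reservation in response.get("Reservations", []):
        for inst in reservation.get("Instances", []):
            state = inst.get("State", {}).get("Name")
            ip = inst.get("PublicIpAddress")
            # Stopped instances have no public address, so skip them.
            if state == "running" and ip:
                ips[inst["InstanceId"]] = ip
    return ips


# Made-up sample in the DescribeInstances response shape.
sample = {
    "Reservations": [
        {"Instances": [
            {"InstanceId": "i-0001", "State": {"Name": "running"},
             "PublicIpAddress": "203.0.113.10"},
            {"InstanceId": "i-0002", "State": {"Name": "stopped"}},
        ]},
        {"Instances": [
            {"InstanceId": "i-0003", "State": {"Name": "running"},
             "PublicIpAddress": "203.0.113.27"},
        ]},
    ]
}

for instance_id, ip in running_public_ips(sample).items():
    print(f"{instance_id}: {ip}")
```

A management tool would do the equivalent of this each morning and hand the user a ready-made connection, rather than leaving them to look the address up.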

Reporting

The last benefit of a good administration tool is reporting. If users are routinely forgetting to turn machines off, you want to know about it. If users aren’t using this system you probably want to know about it too. How are they circumventing this approach, and why?

I don’t think delegation can work without the reporting element, unless shutdown policies are very effective and don’t cause disruption by terminating active sessions. Keep in mind that accountability is much less of a problem when clear, quantifiable costs can be attributed to actions. I think the ideal balance is probably high visibility of reports and delegation of start/stop functionality, potentially coupled with liberal shutdown policies, perhaps at 12 hours of usage. Lastly, it should be clear that any of these approaches would need to be piloted.
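A minimal sketch of the kind of report I have in mind is shown below. It assumes the tool can export one record per running session as (user, team, hours), which is a hypothetical format rather than anything LabSlice or AWS actually produces. The $0.48 hourly rate is the large Windows instance price quoted in the Licensing section, and the 50-hour weekly target is the figure discussed earlier; the users and teams are invented.

```python
from collections import defaultdict

HOURLY_RATE = 0.48         # large Windows instance rate quoted in this post
WEEKLY_HOURS_TARGET = 50   # target discussed earlier in this post


def weekly_report(sessions):
    """Summarise one week of (user, team, hours_running) session records."""
    by_user = defaultdict(float)
    by_team = defaultdict(float)
    for user, team, hours in sessions:
        by_user[user] += hours
        by_team[team] += hours

    # Users who are probably forgetting to turn machines off.
    over_target = sorted(u for u, h in by_user.items() if h > WEEKLY_HOURS_TARGET)
    # Users who are not using the system at all.
    unused = sorted(u for u, h in by_user.items() if h == 0)
    # Attributable cost per team.
    team_cost = {t: round(h * HOURLY_RATE, 2) for t, h in by_team.items()}
    return team_cost, over_target, unused


# Invented sample data for illustration only.
sessions = [
    ("alice", "intranet", 45.0),
    ("alice", "intranet", 4.0),
    ("bob", "intranet", 168.0),   # left running all week
    ("carol", "extranet", 0.0),   # never started an instance
]

team_cost, over_target, unused = weekly_report(sessions)
print("Cost per team:", team_cost)
print("Over target:", over_target)
print("Not using the system:", unused)
```

The value is less in the numbers themselves than in making them visible: a per-team cost is something a manager can act on without policing individual machines.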

Licensing

As mentioned in the first post in this series, Amazon charges for instances based on the type of license they provide. The only license that must be paid for through Amazon is the Windows license, and it is built into the Pay-As-You-Go instance costs. If the instances are used for development, then MSDN/TechNet or other purchased licenses can be used in these environments for everything other than Windows, so long as the type of use is compliant.

Amazon offer an image with SQL Server built into it. You will probably want to avoid this image if you already have a SQL license, as it is considerably more expensive to run: the cost of a large Windows Server 2008 instance increases from $0.48/hour to $1.08/hour. The difference is huge even if these numbers look small. There are 8,760 hours in a year (26,280 in three years), so an always-on instance costs more than $5,000 extra per year, per instance.
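The arithmetic behind that figure is easy to check. The sketch below uses only the two hourly prices quoted above and assumes the instance runs around the clock, which is the worst case.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760

windows_only = 0.48      # $/hour, large Windows Server 2008 instance
windows_with_sql = 1.08  # $/hour, same instance size with SQL included

premium_per_hour = windows_with_sql - windows_only
premium_per_year = premium_per_hour * HOURS_PER_YEAR
premium_three_years = premium_per_year * 3

print(f"SQL premium per hour:    ${premium_per_hour:.2f}")
print(f"SQL premium per year:    ${premium_per_year:,.0f}")
print(f"SQL premium, three years: ${premium_three_years:,.0f}")
```

Running the instance for fewer hours shrinks the premium proportionally, but the hourly gap of $0.60 stays the same.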

One thing that looked promising (until we realised it was only open to users in America) was the “Bring Your Own License” pilot. The program seems to be closed now, but I imagine this option would be interesting for readers of this blog, should that program ever form a core part of the offering, internationally. This of course assumes that subtracting Windows license costs from the instance charges results in a significant saving.

Recommendations

The main contentious issues, for which there is no clear one-size-fits-all guidance, are topology, network configuration and management. We were looking at an all-in-one server, including the DC/DNS roles, on private and public dynamic IP addresses, with considerable piloting in this configuration, supported by LabSlice. The costs of the management tool are going to be insignificant relative to what it saves you, even if you write it yourself. I consider it to be fairly indispensable, with the possible exception of Reserved Instances at the three-year up-front cost of $1,400/instance (plus usage). I will explore these cost specifics in greater detail in my next post.
